Let me start with an uncomfortable number. In April 2026, 62% of US retail investors admitted they now use AI to inform their investment decisions. Two years ago that number was 13%. Adoption is vertical, and the forums are full of traders asking the same question: should I build my own Machine Learning trading signals?

Here’s the part nobody’s saying out loud. The honest literature on whether these signals actually survive contact with live markets is grim. This guide walks you through when machine learning beats a simple rule, what building an ML signal really costs in 2026, and how a retail trader should approach the whole thing without torching an account in the process.

Key Takeaways

- 62% of US retail investors now use AI to inform trades, up from ~13% two years ago.

- A study of 888 real trading algorithms found backtest Sharpe ratios correlate with live performance at R² below 0.025, essentially noise.

- H100 cloud GPU pricing collapsed ~64% in twelve months, making ML research accessible to retail for the first time.

- Data prep still consumes ~45% of a data scientist’s time. An ML edge usually comes from features and data, not model choice.

Why Is Everyone Suddenly Building Machine Learning Trading Signals?

The global algorithmic trading market is projected to grow from $28.47B in 2025 to $99.74B by 2035 at a 13.16% CAGR. That’s the top-line number. The real story is what happened to the inputs (compute, data, and research tools) over the last eighteen months. So why now? Three things collided at once. Cloud GPUs got cheap. Alternative datasets opened up to smaller buyers. And large language models made research workflows ten times faster.

Start with compute. In late 2024, renting an NVIDIA H100 cost around $8 per hour. By April 2026, hyperscaler averages landed between $2.85 and $3.50 per hour, with budget providers quoting $1.49 to $1.99 per hour. AWS alone announced a 44% cut on its p5 instances in June 2025. That’s roughly a 64% drop in twelve months for the single most important piece of ML infrastructure.

Retail adoption followed the price curve. eToro’s Retail Investor Beat pegged US AI-for-trading usage at 30% in October 2025, a 75% year-over-year jump. Six months later, that figure hit 62%. The dataset matters: a 938-respondent retail survey in April 2026 from Investing.com confirmed nearly two-thirds of American retail investors now consult AI before placing trades. Meanwhile, the AI trading platform market itself is on track to grow from $11.23B in 2024 to $33.45B by 2030 at a 20% CAGR.

Do Machine Learning Trading Signals Actually Work?

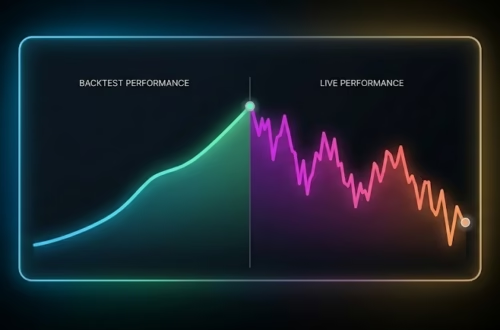

Here’s where I have to puncture the narrative. The most rigorous study ever published on real trading algorithms tracked 888 of them over real out-of-sample periods on the old Quantopian platform. It found that backtest Sharpe ratios correlate with live performance at an R² below 0.025. Translation: your backtest Sharpe tells you essentially nothing about what the strategy will do once real capital is at risk. That finding still stands. Quant researchers cite it every year because nobody’s managed to reproduce a better result.

ML makes this problem worse before it makes it better. A random forest has thousands of splits. An LSTM has millions of parameters. Each one is another knob you can accidentally fit to noise that won’t repeat.

Add in the population-level data. A full-sample study of every Brazilian day trader between 2013 and 2015 found 97% of those who persisted longer than 300 days lost money, and only 1.1% earned more than minimum wage. FINRA’s own data shows roughly 72% of US day traders end the year with net losses. These aren’t ML traders specifically. They’re the population ML traders are trying to beat. Any honest ML project has to answer a hard question: what edge does my model actually have over the 99% who lose?

Our finding: Nine times out of ten, a trader whose backtest suddenly prints a 3.0 Sharpe hasn’t discovered alpha. They’ve discovered a bug in their cross-validation. The fix is rarely a bigger model. It’s a smaller ego about what the model can actually see.

When Does Machine Learning Trading Signals Actually Beat a Simple Rule?

ML trading signals earn their keep in three specific contexts: non-linear feature interactions, alternative data ingestion, and cross-sectional ranking across large universes. Outside those three, a well-tuned moving average or volatility filter often matches or beats a random forest. The single biggest mistake retail builders make is skipping the simple-rule baseline. If your XGBoost can’t beat “buy when the 50-day crosses the 200-day” on the same universe, you don’t have an ML edge. You have a more complicated loss function.

Think about when ML genuinely helps. The first case is non-linear interaction. Momentum works in low-vol regimes, fails in high-vol regimes, and partially reverses during earnings. A tree model captures that interaction; a linear rule can’t. The second case is high-dimensional alt data like satellite imagery, credit card receipts, shipping manifests, and app install panels. The third is cross-sectional ranking: given 3,000 stocks and 50 features each, which 30 are most likely to outperform next month? ML shines when the hypothesis space is too wide for a human to enumerate by hand.

Does this mean a single-instrument discretionary trader should skip ML altogether? Not exactly. But the default answer should be skepticism. Ask yourself whether your universe is wide enough and your features are non-linear enough to justify the added fragility. If not, stay with rules.

From our workbench: Every profitable retail ML workflow we’ve seen shared two traits. The first was a simple rule baseline the model had to beat before anyone wrote a line of training code. The second was an almost paranoid obsession with data leakage. The projects that blew up skipped both.

What Does It Actually Cost to Build an Machine Learning Trading Signals in 2026?

Three buckets: data, compute, and time. Start with data. Hedge funds are pouring money into alternative data. 95% of them plan to maintain or increase alt-data budgets in 2025, with average annual spending around $1.6M and the largest firms topping $5M across 43 vendors. The broader alt-data market is projected to grow from $14.16B in 2025 to $135.72B by 2030, a 63.4% CAGR. Retail traders obviously can’t match those budgets. But you don’t need to.

Compute is where the math suddenly works for a retail builder. H100 cloud rental has dropped into territory where an afternoon of batch experiments costs less than a decent lunch. That changes the calculus for anyone who couldn’t justify cloud spend twelve months ago.

Time is the real cost, and it’s the one nobody budgets. Annual practitioner surveys consistently show data scientists spend around 45% of their working hours on data prep: cleaning, joining, labeling, and filling gaps. Another study found 73.5% of technical workers spend at least a quarter of their time managing data. If you budget 40 hours to build a signal, 18 of them will disappear into feature engineering before a single model ever runs.

So what does all of this actually cost for a retail builder? Here’s the retail-scale translation. Each tier has a clear edge profile. Pick the smallest one that actually fits your hypothesis.

| Monthly budget | Data stack | Compute | Model types | Best fit |

|---|---|---|---|---|

| $0 | yfinance, EOD Historical Data, Alpha Vantage free | Google Colab free tier, local CPU | scikit-learn, XGBoost baselines | Prototyping on liquid equities, learning the workflow |

| $50 | Polygon.io or Databento intraday bars, tiingo fundamentals | Colab Pro, Paperspace Gradient | LightGBM, small PyTorch nets | Single-instrument live signals, walk-forward validation |

| $500 | One or two alt datasets (sentiment, panels) + clean bars | Nightly H100 cloud GPU, multi-GPU training | Transformers, ensemble stacking, cross-sectional | Multi-asset research, serious alpha hunting |

A week of continuous H100 time at $1.75/hr now costs $294. Most ML trading research doesn’t need a week. It needs an afternoon of batch experiments, which means the whole compute budget for iterating on a signal for a single instrument fits inside what a trader already spends on a data subscription.

How Should a Retail Trader Actually Approach Building an ML Signal?

Follow a capital-preservation framework in five steps: define a measurable edge, benchmark against a simple rule, test on genuinely unseen out-of-sample data, paper trade for at least 60 days, then size live capital with fraction-of-Kelly. Every step exists because a real trader once skipped it and lost money. Including me, more than once. The whole point of the framework is to surface failure before it hits your account.

Step one is the hypothesis. Write down, in one sentence, what inefficiency your model is exploiting. “XGBoost finds patterns” is not a hypothesis. “Post-earnings drift persists for five days in small caps under $500M market cap” is. If you can’t state it in a sentence, the backtest won’t save you later.

Step two is the simple rule benchmark. Build a three-line-of-code version of your hypothesis first. If a moving-average crossover on the same universe returns 0.8 Sharpe and your ML signal returns 0.9 Sharpe, you didn’t find an ML edge. You found 0.1 Sharpe of extra fragility. Kill the project and move on.

Our rule of thumb: Any ML signal that beats its simple-rule baseline by less than 25% on Sharpe after transaction costs is too fragile to deploy. The ML version adds failure modes, and the extra return has to pay for them.

Step three is out-of-sample testing the hard way. Use walk-forward validation with a hold-out period the model has genuinely never seen. Step four is paper trading for at least 60 days, long enough to hit at least one real volatility regime you didn’t plan for. Step five is live sizing at one-quarter Kelly or less until you have 90 days of live track record. Then route the signal through an execution platform that keeps research and live order flow completely separate, so a code bug can’t brick your account at 9:35am.

Where Are Machine Learning Trading Signals Heading Next?

So what should retail traders actually watch for? Three converging trends will shape the next five years: alt data democratization, LLM-assisted research, and regulatory scrutiny. The alt-data market is projected to grow at a 63.4% CAGR through 2030, with hedge funds currently holding 71% of revenue share. As enterprise prices normalize, retail-tier slices of the same datasets will follow. Credit card panels and app install data are already showing up inside $99/month retail products that didn’t exist twelve months ago.

Large language models changed the research loop too. JPMorgan deployed its internal LLM Suite to roughly 250,000 employees in 2025, and the tool picked up American Banker’s 2025 Innovation of the Year award. Retail traders now have the same class of tools. The productivity delta between a trader using GPT-5-class models for feature brainstorming and one who isn’t is real and growing. Regulators noticed as well. Both IOSCO and the IMF published papers in 2025 flagging AI-driven trading as a supervisory priority. Expect disclosure rules and model-risk frameworks in the next 24 months.

Frequently Asked Questions

Can a retail trader build a profitable ML trading signal without a PhD?

Yes, but only with a narrow hypothesis and rigorous out-of-sample testing. Discipline matters more than credentials.

How much capital do I need before building ML signals makes sense?

Under $5,000, costs eat most edges. Between $5,000–$25,000, the signal must beat a simple rule by at least 25% on Sharpe. Above $25,000, economics improve.

Which is better for trading signals — XGBoost, LSTM, or reinforcement learning?

XGBoost and LightGBM win for tabular market data. Use LSTMs or transformers only for raw tick data or text. Start simple.

How long should I paper trade an ML signal before going live?

Minimum 60 days, 90 days is better. You need to survive at least one unexpected volatility regime.

Ready to Put Your Signal Into Production?

Machine learning trading signals work when you respect three things: the capital-preservation framework, the simple rule baseline, and the gap between what a backtest promises and what live markets deliver. The 62% of retail investors now using AI for trading will split into two groups over the next 24 months. The first group will treat ML as a magic oracle. The second group will treat it as a research tool with hard guardrails. Only the second group will still be trading in five years.

When your signal is finally ready for live markets, don’t let execution plumbing kill the edge you earned. PickMyTrade routes TradingView alerts into brokers like Tradovate, NinjaTrader, and Interactive Brokers without the $25–$589/month CME API fee stack, so you can focus on the research loop instead of the order pipe. Start with a free account and wire up your first ML-driven alert in under five minutes.

Disclaimer:

This content is for informational purposes only and does not constitute financial, investment, or trading advice. Trading and investing in financial markets involve risk, and it is possible to lose some or all of your capital. Always perform your own research and consult with a licensed financial advisor before making any trading decisions. The mention of any proprietary trading firms, brokers, does not constitute an endorsement or partnership. Ensure you understand all terms, conditions, and compliance requirements of the firms and platforms you use.

Also Checkout: Automate TradingView Indicators with Tradovate Using PickMyTrade