For traders, DeepSeek V4 vs Claude Opus 4.6 is now the most important comparison in AI-driven markets.

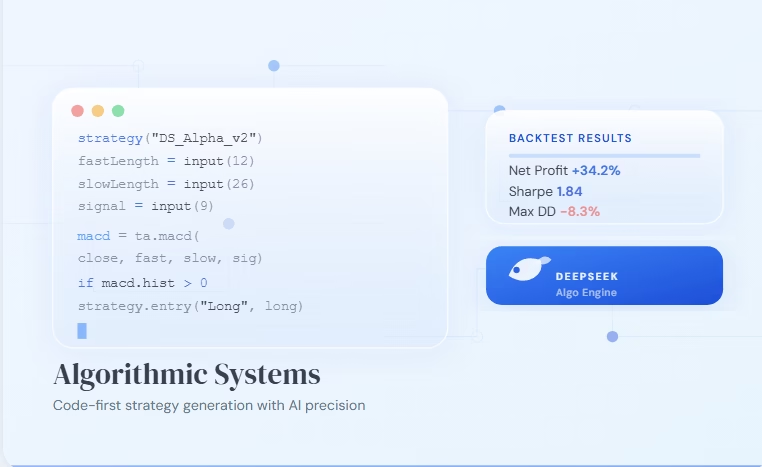

For two years, the trading world followed one simple rule. If you wanted an AI that writes solid Pine Script, reads your chart images, and works smoothly with TradingView, you paid for Claude. In the DeepSeek vs Claude trading debate, that rule is now being broken in practice, not just in theory.

The 58‑page DeepSeek‑V4 technical report has now torn up that rulebook. However, what replaces it is not a clean win for anyone. The balance of power has shifted decisively towards quants, algo traders, and anyone who works mostly with code.

DeepSeek‑V4 is the Daredevil of quantitative finance: blind to charts, yet hyper‑aware of the market’s mathematical heartbeat.

After reading every page and cross‑checking the benchmarks against Claude Opus 4.6, I found an uncomfortable truth. DeepSeek‑V4 is now the mathematically stronger model for quantitative finance. Meanwhile, Claude still dominates the point‑and‑click, chart‑based trading workflow. The crown has split, and for a growing number of traders, the old king is simply too expensive and too closed.

What DeepSeek-V4 Just Changed for Quants

Two numbers from the paper completely rewrite the cost of AI‑powered trading:

- A 1‑million‑token context window that needs only 27% of the computing power of the previous generation. The smaller Flash model goes even further, needing just 10%.

- Memory requirements (KV cache) cut to one‑tenth of the earlier DeepSeek‑V3.2. As a result, long‑context inference becomes far cheaper to run on much less hardware than before, including your own servers.
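To see why a one-tenth KV cache matters at 1 million tokens, here is a back-of-the-envelope sketch using the standard size formula for a plain multi-head-attention cache: 2 (keys and values) × layers × KV heads × head dimension × tokens × bytes per value. The layer count, head count, and head dimension below are hypothetical placeholders, not DeepSeek-V4's actual architecture (which uses its own attention design); the sketch only shows how a tenfold reduction scales.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   tokens: int, bytes_per_value: int = 2) -> int:
    """Size in bytes of a standard per-sequence KV cache.

    The leading factor of 2 covers keys and values; bytes_per_value=2 assumes FP16/BF16.
    """
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


GIB = 1024 ** 3

# Hypothetical model shape (NOT DeepSeek-V4's real configuration).
baseline = kv_cache_bytes(layers=60, kv_heads=8, head_dim=128, tokens=1_000_000)
# Apply the one-tenth reduction described in this article.
reduced = baseline / 10

print(f"baseline: {baseline / GIB:.1f} GiB per 1M-token sequence")
print(f"reduced:  {reduced / GIB:.1f} GiB per 1M-token sequence")
```

Because the cache grows linearly with sequence length, a tenfold cut is the difference between a cache that needs a multi-GPU node and one that fits alongside the weights on far less hardware.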

On top of that, the MIT open‑source license gives you something no proprietary model can match: private, million‑token reasoning that never leaves your own setup, limited only by the hardware you run it on. For quant firms guarding valuable alpha, that privacy alone may be worth more than any benchmark score.

The benchmarks below show where each model leads in the DeepSeek vs Claude trading comparison.

Head‑to‑Head Benchmark: DeepSeek‑V4‑Pro‑Max vs Claude Opus 4.6

How the Comparison Works

All results below come from the official DeepSeek‑V4 evaluation tables and available Opus 4.6 scores. Where I used a third‑party source for an Opus number, I have noted it.

The Numbers That Matter Most for Traders and Quants

| Benchmark (what it measures) | DeepSeek‑V4‑Pro‑Max | Claude Opus 4.6 | Winner | Why this matters for your trading |
|---|---|---|---|---|
| LiveCodeBench (Pass@1) | 93.5 | 88.8 | DeepSeek | Real‑world coding contests. Directly linked to building reliable Python backtesting code. |
| Codeforces Rating | 3,206 | 3,168 | DeepSeek | Competitive programming skill. V4‑Pro‑Max ranks 23rd among human coders. |
| SimpleQA Verified (Pass@1) | 57.9 | 45.3* | DeepSeek | Short‑form factual accuracy. A proxy for fewer hallucinated facts when you work with FOMC minutes or COT reports. |
| HMMT 2026 Feb (Pass@1) | 95.2 | 94.7 | DeepSeek | Harvard‑MIT high‑school math tournament. Shows the raw math reasoning needed for options pricing and factor models. |
| Apex Shortlist (Pass@1) | 90.2 | 85.9 | DeepSeek | Elite math benchmark. Highly relevant for quant researchers formalising new strategies. |
| MMLU‑Pro (EM) | 87.5 | 91.0 | Opus | Broad academic knowledge. Opus still leads in wide‑ranging world knowledge. |
| GPQA Diamond (Pass@1) | 90.1 | 94.3 | Opus | Graduate‑level reasoning. Opus has a small edge in deep scientific problems. |
| MRCR 1M (MMR) | 83.5 | n/a (Opus 4.5 scored 76) | DeepSeek (vs Opus 4.5) | Multi‑round coreference retrieval over 1 million tokens, a harder variant of needle‑in‑a‑haystack. V4‑Pro handles it well. |
| Cost per 1M input tokens | ~$0.27 | $15.00 | DeepSeek | About 55 times cheaper. Large‑scale backtests and Monte Carlo runs become affordable. |
| Self‑hosting / Privacy | MIT license, full weights | None (cloud‑only) | DeepSeek | Zero exposure of your strategy to third parties. Total IP protection. |
| Vision / Chart Screenshots | No | Yes | Opus | The one feature that keeps many discretionary traders on Claude. |
| TradingView MCP Integration | No | Yes | Opus | Agentic workflow: write, inject, compile, and arm alerts in one go. |

*The Opus 4.6 SimpleQA score of 45.3 is from third‑party data; DeepSeek’s own paper reports 57.9 for V4‑Pro‑Max.
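MRCR builds on the needle-in-a-haystack idea with multiple rounds and coreferences. The simplified single-needle probe below is an illustration of the basic idea, not the MRCR protocol itself: it buries one fact at a chosen depth in long filler text and checks whether the model's answer recovers it. The filler sentence, the needle, and the `ask_model` callable are hypothetical; you would plug in whatever client you actually use.

```python
from typing import Callable


def build_haystack(needle: str, filler: str, n_filler: int, depth: float) -> str:
    """Repeat `filler` n_filler times and insert `needle` at a relative depth (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    chunks = [filler] * n_filler
    chunks.insert(round(depth * n_filler), needle)
    return "\n".join(chunks)


def run_probe(ask_model: Callable[[str], str], secret: str,
              n_filler: int = 10_000, depth: float = 0.5) -> bool:
    """Return True if the model's answer contains the secret buried in the context."""
    needle = f"The desk's risk limit code is {secret}."
    filler = "Markets opened quietly and volume stayed below average."
    context = build_haystack(needle, filler, n_filler, depth)
    prompt = context + "\n\nQuestion: What is the desk's risk limit code?"
    return secret in ask_model(prompt)


# Stand-in "model" used only to test the harness: it searches the prompt directly.
def fake_model(prompt: str) -> str:
    marker = "risk limit code is "
    start = prompt.find(marker)
    return prompt[start + len(marker):].split(".")[0] if start >= 0 else ""


print(run_probe(fake_model, secret="ZX-4471"))
```

Sweeping `depth` from 0.0 to 1.0 and `n_filler` upward is how you would map where, if anywhere, a model starts to lose track of the buried fact.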

Which Model Should You Use? A Trader‑by‑Trader Breakdown

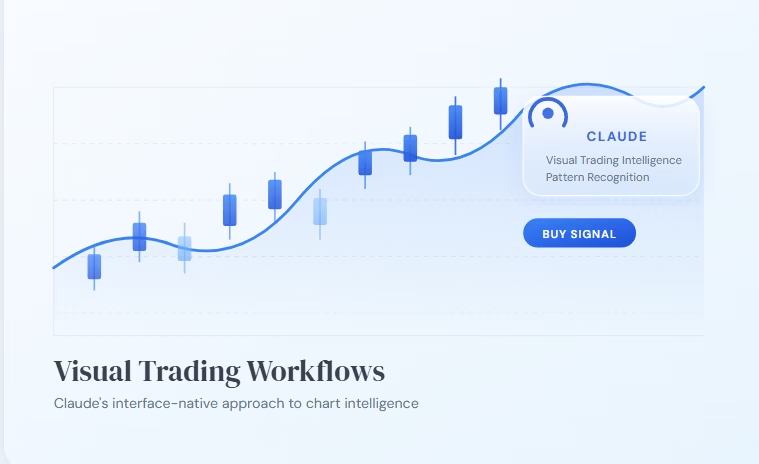

The Discretionary Prop Firm Trader

In this workflow, you look at charts to spot zones and patterns. You want an AI that can see what you see and instantly generate an alert. For these tasks, vision and smooth TradingView integration are everything.

Verdict: Claude Sonnet 4.6 or Opus 4.6. DeepSeek‑V4 cannot read images, so it cannot fit into your main workflow. Moreover, community‑built TradingView MCP servers let Claude act as a live agent that controls your charts directly. DeepSeek has no comparable setup right now.

The Quant Researcher

Quants deal with data: years of price history, order‑book snapshots, and economic time series. They need a model that writes flawless vectorised pandas code, derives GARCH variants, or builds a Kalman filter without hallucinating library functions.

Verdict: DeepSeek‑V4‑Pro‑Max. The numbers are clear. On LiveCodeBench (93.5 vs 88.8), Codeforces (3,206 vs 3,168), and formal math (Apex Shortlist 90.2 vs 85.9), DeepSeek is the mathematically sharper tool. In addition, its roughly 55× cheaper input pricing and self‑hosting ability make it the obvious choice for high‑volume research and data‑heavy tests.
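As an example of the kind of code this workflow asks for, here is a minimal GARCH(1,1) conditional-variance filter in NumPy, implementing the recursion sigma²[t] = omega + alpha·r²[t−1] + beta·sigma²[t−1]. It assumes omega, alpha, and beta have already been estimated (fitting them, for example by maximum likelihood, is out of scope), and the returns are synthetic. It is an illustration, not output from either model.

```python
import numpy as np


def garch11_variance(returns: np.ndarray, omega: float, alpha: float, beta: float) -> np.ndarray:
    """Conditional variance path of a GARCH(1,1) model with given (pre-fitted) parameters.

    sigma2[t] = omega + alpha * returns[t-1]**2 + beta * sigma2[t-1]
    The recursion is seeded with the unconditional variance omega / (1 - alpha - beta).
    """
    if omega <= 0 or alpha < 0 or beta < 0 or alpha + beta >= 1:
        raise ValueError("need omega > 0, alpha >= 0, beta >= 0 and alpha + beta < 1")
    sigma2 = np.empty(len(returns))
    sigma2[0] = omega / (1.0 - alpha - beta)
    for t in range(1, len(returns)):
        sigma2[t] = omega + alpha * returns[t - 1] ** 2 + beta * sigma2[t - 1]
    return sigma2


rng = np.random.default_rng(seed=42)
daily_returns = rng.normal(0.0, 0.01, size=500)  # synthetic returns, not market data
vol = np.sqrt(garch11_variance(daily_returns, omega=1e-6, alpha=0.08, beta=0.90))
print(f"last conditional daily vol: {vol[-1]:.4%}")
```

The recursion is inherently sequential, so a plain loop is used here; the surrounding data handling (resampling, log returns) is where vectorised pandas code usually pays off.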

The Algo Developer

Algo developers build production trading systems. They need clean, bug‑free code that talks to REST and WebSocket APIs, manages order state, and respects rate limits. Latency, privacy, and the ability to iterate fast all matter greatly.

Verdict: DeepSeek‑V4‑Pro (primary) with occasional Claude Opus (code review). DeepSeek’s raw coding strength and massive cost advantage make it ideal for generating and refining bot logic. Since you can self‑host it, you can embed the model inside your own pipeline without sending any private logic outside. You could then use Opus as a second‑opinion reviewer for the most critical parts, although at $15 per million input tokens you probably won’t use it for everyday work.
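Respecting exchange rate limits is a typical piece of bot logic you might ask either model to write. Below is a minimal token-bucket limiter as a sketch; the capacity and refill rate are hypothetical, so check your broker's or exchange's documented limits. The clock is injectable so the logic can be tested without sleeping.

```python
import time
from typing import Callable


class TokenBucket:
    """Token-bucket rate limiter: allows bursts up to `capacity`, refills at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity  # start full so an initial burst is allowed
        self.last = clock()

    def _refill(self) -> None:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now

    def try_acquire(self, cost: float = 1.0) -> bool:
        """Consume `cost` tokens if available; return False (do not send the request) otherwise."""
        self._refill()
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Hypothetical limit: bursts of 5 requests, refilling at 2 requests per second.
bucket = TokenBucket(capacity=5, rate=2.0)
sent = sum(bucket.try_acquire() for _ in range(8))
print(f"sent {sent} of 8 back-to-back requests")
```

A rejected request would normally be queued and retried once tokens refill, rather than dropped.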

The Full‑Stack Trader

Many traders take courses, extract rule sets, build strategies, and then fire live alerts. They want an AI that can absorb an entire curriculum and produce working Pine Script.

Verdict: Split stack. DeepSeek‑V4’s 1‑million‑token context window is well suited to taking in 40 hours of lecture transcripts and extracting the trading rules they teach. However, once you have your strategy in text form, you still need Claude to inject it into TradingView and handle the visual side. Use DeepSeek for the heavy text work, then switch to Claude Code for the final live setup.
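A quick way to check whether a course really fits in a 1-million-token window is to estimate its token count. The speaking rate (150 words per minute) and the ratio of about 1.3 tokens per English word are rough assumptions that vary by speaker and tokenizer, so treat the result as an order-of-magnitude estimate.

```python
def transcript_tokens(hours: float, words_per_minute: float = 150.0,
                      tokens_per_word: float = 1.3) -> int:
    """Rough token estimate for a spoken transcript (both rates are assumptions)."""
    return round(hours * 60 * words_per_minute * tokens_per_word)


CONTEXT_WINDOW = 1_000_000

tokens = transcript_tokens(hours=40)
print(f"~{tokens:,} tokens, {tokens / CONTEXT_WINDOW:.0%} of a 1M-token window")
if tokens > CONTEXT_WINDOW:
    print("Too large: split the course into chunks and summarise each one first.")
```

Under these assumptions, 40 hours works out to about 468,000 tokens, which leaves room in the window for instructions and the model's answer.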

Where Opus 4.6 Still Outperforms DeepSeek‑V4

Three Areas Where Opus Leads

- Vision and chart interpretation: Of these two models, only Opus can look at a candlestick chart and directly answer a question like “Is this a valid supply zone?” If your edge depends on visual pattern recognition, DeepSeek‑V4 cannot help you.

- Agentic MCP ecosystem: Claude Code combined with a TradingView MCP server lets you control your whole workflow through natural‑language commands. DeepSeek has no matching tool. This is a tooling gap, not an intelligence gap, but it keeps Claude deeply embedded in the retail trader community.

- MMLU‑Pro and GPQA: Opus still scores higher on broad academic knowledge and graduate‑level science. For complex macro analysis that draws on wide‑ranging knowledge, Opus may therefore be slightly more reliable.

Why These Advantages May Not Last

Here is the uncomfortable truth for Anthropic. These remaining advantages serve a shrinking group of traders. The fastest growth in trading AI is not coming from chart watchers. Instead, it is coming from quantitative researchers and algo developers who need raw coding and math power. On that front, DeepSeek‑V4 has already won.

Final Scorecard: DeepSeek‑V4 vs Claude Opus 4.6 for Trading

| Criterion | DeepSeek‑V4‑Pro‑Max | Claude Opus 4.6 | Best for most traders |
|---|---|---|---|
| Live coding accuracy | Wins | Loses | DeepSeek |
| Competitive programming | Wins | Loses | DeepSeek |
| Formal math and theorem proving | Wins | Loses | DeepSeek |
| Factual precision (SimpleQA) | Wins | Loses | DeepSeek |
| Cost efficiency | Wins (55× cheaper) | Loses | DeepSeek |
| Self‑hosting and privacy | Wins (MIT) | Loses | DeepSeek |
| Broad academic knowledge | Loses | Wins | Opus |
| Graduate‑level reasoning | Loses | Wins | Opus |
| Code review and agentic debugging | Loses | Wins (MCP) | Opus |
| Chart screenshots and vision | Loses | Wins | Opus |
| TradingView MCP injection | Loses | Wins | Opus |
| Long‑context retrieval (MRCR 1M) | Wins | No official Opus 4.6 score | DeepSeek likely |

For most users, the DeepSeek vs Claude trading decision depends on workflow, not hype.

If you are a quant, algo developer, or heavily data‑driven researcher, DeepSeek‑V4‑Pro‑Max is now the better model. It is faster, far cheaper, more private, and measurably stronger at the math and code that define your work.

If you are a discretionary trader who depends on chart visuals and the TradingView interface, Claude Opus 4.6 still holds an ecosystem lead that DeepSeek cannot yet match.

For everyone else, the smart move is to split the stack.

DeepSeek has ended the era of using just one AI for all trading tasks. The comfortable habit of “just use Claude” is now gone for good.

Ready to Automate? Your Next Moves

The DeepSeek vs Claude trading shift is ultimately about choosing the right workflow for your edge. Use DeepSeek for math, code, and research, and use Claude for visual workflows and final execution.
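The split-stack rule can be expressed as a tiny routing table. The task categories and model labels below are hypothetical placeholders, not identifiers from either vendor's API; the sketch only shows routing by task type, with anything involving an image or TradingView going to Claude and text-only math, code, and research going to DeepSeek.

```python
from enum import Enum


class Task(Enum):
    QUANT_RESEARCH = "quant_research"
    BOT_CODE = "bot_code"
    TRANSCRIPT_EXTRACTION = "transcript_extraction"
    CHART_SCREENSHOT = "chart_screenshot"
    TRADINGVIEW_DEPLOY = "tradingview_deploy"


# Hypothetical labels for the two halves of the stack (not real API model IDs).
DEEPSEEK = "deepseek-v4"
CLAUDE = "claude-opus-4.6"

ROUTES = {
    Task.QUANT_RESEARCH: DEEPSEEK,
    Task.BOT_CODE: DEEPSEEK,
    Task.TRANSCRIPT_EXTRACTION: DEEPSEEK,
    Task.CHART_SCREENSHOT: CLAUDE,    # needs vision
    Task.TRADINGVIEW_DEPLOY: CLAUDE,  # needs the TradingView MCP tooling
}


def route(task: Task, has_image: bool = False) -> str:
    """Pick a model for a task; any request with an image goes to the vision-capable model."""
    if has_image:
        return CLAUDE
    return ROUTES[task]


print(route(Task.BOT_CODE))
print(route(Task.QUANT_RESEARCH, has_image=True))
```

Keeping the image check ahead of the table lookup means a chart screenshot never reaches the text-only model, whatever the task label says.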

If you already have a TradingView strategy or indicator and want to automate it, PickMyTrade lets you connect your alerts directly to your broker and execute trades automatically. As always, no tool guarantees profits. Combine automation with disciplined risk management, proper backtesting, and continuous improvement. Grab the 5-day free PickMyTrade trial: https://pickmytrade.com

Disclaimer: This content is for informational purposes only and does not constitute financial, investment, or trading advice. Trading and investing in financial markets involve risk, and it is possible to lose some or all of your capital. Always perform your own research and consult with a licensed financial advisor before making any trading decisions. The mention of any proprietary trading firms or brokers does not constitute an endorsement or partnership. Ensure you understand all terms, conditions, and compliance requirements of the firms and platforms you use.

Source Links

- DeepSeek‑V4 Official HuggingFace Collection: huggingface.co/deepseek-ai/DeepSeek-V4-Pro

- Claude Opus 4.6 Announcement: anthropic.com

- TradingView MCP (Jackson): github.com/LewisWJackson/tradingview-mcp-jackson

- TVControl MCP Server: github.com/TheRealSeanDonahoe/tvcontrol

- SWE‑bench Verified Leaderboard: llm-stats.com

- DeepSeek‑V4 MRCR 1M Analysis: developer.aliyun.com/article/1731431


Is DeepSeek‑V4 truly that much better at math and coding than Opus?

Yes, on the published benchmarks: 93.5 vs 88.8 on LiveCodeBench, a 3,206 vs 3,168 Codeforces rating, and 90.2 vs 85.9 on Apex Shortlist. The LiveCodeBench and Apex Shortlist gaps are substantial, while the Codeforces gap is narrow, but all three point the same way on coding and problem‑solving skill.

Can DeepSeek‑V4 read chart screenshots?

No. It is a text‑only model. If your workflow needs visual chart analysis, Claude remains your best option.

Can I self‑host DeepSeek‑V4 for private trading strategies?

Yes. The models are MIT‑licensed and the full weights are on HuggingFace. If you have enough GPU capacity to run them on your own hardware, your prompts and strategy code never leave your infrastructure.

Which model is better for building a TradingView Pine Script alert system?

For writing the Pine Script itself, DeepSeek‑V4‑Pro is now very competitive. However, to inject it directly into a live chart and test it, you need Claude Code with TradingView MCP. The best approach is to use DeepSeek for the code and then switch to Claude for the final integration.

What does the cost difference actually mean in practice?

With DeepSeek‑V4‑Flash at about $0.10 per million input tokens versus $15.00 for Opus 4.6, the same budget buys roughly 150 times as many tokens. That gap is what makes it affordable to have a model generate and review code for a full Monte Carlo sweep across 10,000 parameter sets instead of a single backtest.
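The ratios quoted in this article follow directly from the per-million-input-token prices it uses ($15.00 for Opus 4.6, about $0.27 for V4-Pro, about $0.10 for V4-Flash). The sketch below reproduces them and converts a fixed budget into a number of model calls. The $50 budget and 20,000-token prompt size are hypothetical, and output tokens, which are usually priced higher, are ignored.

```python
# Per-million-input-token prices as quoted in this article (USD); check current pricing pages.
PRICE_PER_M_INPUT = {
    "claude-opus-4.6": 15.00,
    "deepseek-v4-pro": 0.27,
    "deepseek-v4-flash": 0.10,
}

BUDGET_USD = 50.0        # hypothetical budget
PROMPT_TOKENS = 20_000   # hypothetical input size per call


def calls_for_budget(budget_usd: float, tokens_per_call: int, price_per_m: float) -> int:
    """Number of calls of a given input size that fit in a budget (input tokens only)."""
    # tokens x ($ per 1M tokens) equals the cost per call in micro-dollars,
    # so compare it with the budget expressed in micro-dollars.
    cost_micro_usd = tokens_per_call * price_per_m
    return int(budget_usd * 1_000_000 // cost_micro_usd)


opus = PRICE_PER_M_INPUT["claude-opus-4.6"]
for name, price in PRICE_PER_M_INPUT.items():
    n = calls_for_budget(BUDGET_USD, PROMPT_TOKENS, price)
    print(f"{name:18s} price ratio vs Opus: {opus / price:6.1f}x | {n:,} calls for ${BUDGET_USD:.0f}")
```

With these inputs, $50 covers 166 Opus calls versus 25,000 Flash calls, which is the 150× ratio in practice.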

Who wins for prop firm challenge design?

For multi‑constraint reasoning (daily loss limit, max drawdown, minimum trading days), Opus 4.6 is slightly more consistent. However, by combining DeepSeek’s math ability with careful prompt engineering, you can close that gap while spending far less.
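The constraints named here are easy to state in code, which is also a useful way to check a model's reasoning about them. The sketch below evaluates a series of end-of-day P&L values against a daily loss limit, a trailing maximum drawdown, and a minimum number of trading days. All thresholds are hypothetical, and real firms define these rules differently (drawdown from starting balance versus equity high-water mark, intraday versus end-of-day), so adapt it to your firm's actual terms.

```python
from dataclasses import dataclass


@dataclass
class ChallengeRules:
    # Hypothetical thresholds; substitute your firm's published rules.
    start_balance: float = 50_000.0
    daily_loss_limit: float = 1_000.0       # max loss allowed on any single day
    max_trailing_drawdown: float = 2_500.0  # max drop from the end-of-day balance peak
    min_trading_days: int = 5


def check_challenge(daily_pnl: list[float], rules: ChallengeRules) -> list[str]:
    """Return rule violations; an empty list means every rule was respected.

    Uses end-of-day balances only (intraday drawdown is not modelled) and
    counts a day as a trading day when its P&L is non-zero (an assumption).
    """
    violations = []
    balance = peak = rules.start_balance
    for day, pnl in enumerate(daily_pnl, start=1):
        if pnl < -rules.daily_loss_limit:
            violations.append(f"day {day}: daily loss {pnl:,.0f} exceeds limit")
        balance += pnl
        peak = max(peak, balance)
        if peak - balance > rules.max_trailing_drawdown:
            violations.append(f"day {day}: drawdown {peak - balance:,.0f} exceeds limit")
    traded_days = sum(1 for pnl in daily_pnl if pnl != 0)
    if traded_days < rules.min_trading_days:
        violations.append(f"only {traded_days} trading days, need {rules.min_trading_days}")
    return violations


print(check_challenge([400, -1_200, 300, -900, -800, 250], ChallengeRules()))
```

In the sample run, day 2 breaks the daily loss limit and day 5 breaks the trailing drawdown, even though no single later day looks dramatic on its own. That interaction between constraints is exactly what a prompt to either model needs to spell out.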